While there’s less stigma attached to depression than there used to be, it’s still not always accepted, and many people have a hard time understanding it.

In the last six months I’ve talked to a lot of people who don’t understand what chronic depression is like. This includes giving a talk at the USC film school to graduate and undergraduate students about being a creative and dealing with depression (Thanks Norman Hollyn!). About six months ago I attended the funeral of a friend who committed suicide, and last week a co-worker’s uncle killed himself. Even at my friend’s funeral, someone giving a speech said, ‘he was bipolar, but it wasn’t like he was depressed and down-and-out’. As if being depressed and acting like a derelict were the same thing.

This blog post is:

1) an attempt to give folks who don’t deal with chronic depression a better understanding of it, how it manifests and, maybe, what to do about it (both as a sufferer and as someone who cares about someone suffering).

2) a message to the many people who identify as ‘creative’ and struggle with similar issues: I want you to know you are not alone. It’s a lonely disease; we isolate ourselves and feel isolated by it. Nevertheless, you are not alone.

3) a way to start the discussion, both for those suffering and for those trying to understand and help them. It doesn’t help anyone to not talk about it. Let’s de-stigmatize it.

My Struggle

I’ve struggled with depression and suicidal thoughts for almost 40 years, since my early teens. Please keep in mind that this post comes from my own experience, what I’ve learned from therapists, and what’s worked for me. I’m not a therapist. If you suffer from depression, it’s usually very beneficial to see a therapist or psychologist. It’s really important that you have help. I also encourage those of you who are therapists, or who have struggled with depression, to talk about your experiences and what’s been helpful (or not) for you. Please post in the comments!

Let’s start off by attempting to talk about what it’s like to be depressed, or at least how it manifests for me. Everyone is different, but my experience can give you some insight into the disease.

For almost as long as I can remember, I’ve had a voice inside me telling me, every day, that I’m worthless and unlovable and that life is not worth living. All the time. Most of the time, that voice is just barely audible background noise, easily dismissed. But on some days it’s the sound and fury of a hurricane. On those days suicide becomes a tangible thing. I’ll talk more about that in a moment.

The rest of the time, dismissing the voice takes time and energy. It can suck the joy out of successes and it magnifies failures. It is a weight that I constantly struggle against. This is despite the fact that I have what most people would consider a pretty good life.

I’m fully aware I’m blessed… I run a successful company that I started, I have a lot of love and support around me, and I have a good partner. And yet…

The awareness that I have so much to be grateful for often makes it harder. On top of the depression, guilt and shame pile on for knowing that I have all these good things and yet I’m still depressed. The depression becomes like Teflon. Rationally, I’m aware of the love and support around me. I know such things exist. But they roll off the darkness like beads of water, unable to be absorbed to the depths where they would help. The feelings can’t be internalized.

I know I SHOULD be grateful, but I can’t manifest it, which just increases the frustration and pain.

I realize all this sounds pretty bleak. Probably bleaker than it actually is a lot of the time. Remember that most of the time the thoughts are just background noise. They definitely have a bit of a dampening effect, but I can still feel happy or joyful or neutral or whatever. I don’t usually have a problem moving through the world like everyone else. That said, on the bad days, the above description doesn’t come close to capturing the depths of the darkness: how dark the thoughts have to be to make suicide a viable option. But it can get there.

So what should you do?

If you want to help someone who’s deeply depressed, perhaps even suicidal, you have to meet the person where they’re at, NOT where you want them to be. Even if they say they’re suicidal. Accept that depression is an illness and hear them out. LISTEN to them. Acknowledge what they are feeling. Make them feel heard. Make them feel loved… by listening, by asking gentle questions (How did that make you feel? Why do you think it affected you like that? Is there anything that would make it better?), by making time for them, by being non-judgmental. Let them tell their story. But also be part of the conversation. Don’t just ruminate with them. Try to move the conversation forward.

However, it may be hard to get them to engage. Realize that there are a lot of non-verbal things happening… Depression is more, perhaps much more, something you feel in your body than something in your head. So hugs without words are sometimes the best thing. Offer to go out and get them their favorite food or bring them soup. Of course, you can also just ask them what they need.

You’re not going to solve it. All you can do is support them in solving it for themselves.

If they are suicidal, you need to accept the fact that suicide is a viable option. Just because you don’t want it to happen doesn’t mean it can’t or won’t happen. If someone believes suicide is an option and you tell them that it’s not, you’re making it more likely. You’re invalidating their opinion, invalidating what they’re feeling. By doing so you’re confirming that they mean nothing. And, again, be careful about how you tell them what they have to live for. They are probably very well aware of the things that they _should_ feel grateful for.

In truth, if you suspect someone is depressed, you should consult a therapist. I am not a therapist. I’m just relating my own struggle with chronic depression, and every person’s struggle is different. Everyone’s reasons for being depressed are different… in many cases, it’s not chronic but event-driven (a divorce, a death, getting fired, etc.). Listening is always a good strategy, but a therapist will be able to offer better advice for the specific situation.

The other thing to know is that those of us who have dealt with depression for a long time are often good at putting a brave face on it. It may not be obvious we’re depressed, which is why suicide often comes as a shock. Just because someone is outwardly successful and seems to have it together doesn’t mean they aren’t suffering and struggling underneath it all. In a lot of cases, it’s up to the depressed person to realize they are not alone and that they can get help.

If YOU struggle with depression…

This is a lonely and difficult struggle. It’s particularly hard when you’re younger and still learning what it is and what might help, but it’s difficult at any age. You have to find the strength of will to pull yourself out of it enough to either help yourself or reach out and take the hands of those offering to help.

As mentioned, see a therapist or psychologist. It really does help to talk things out. Often a therapist can help you see things and patterns you can’t see for yourself.

One of the important things is to get out of the house. If you can at least find the strength to go be depressed in a park, a makerspace, a gym, a mall, whatever… you’ll find it helps. Go somewhere and do something you enjoy. It’s especially good if you can connect with a friend, but I’ve found that just being in a place where there are other people helps. If being away from people works better for you, at least try not to just stay in bed or on the couch. Take a walk in a secluded park or something.

Connect with people. Even though it seems like no one cares, you’ll find if you reach out, you have friends who do care and will help.

There are other things that can help as well. They tend to be somewhat different for each person, but it’s important to find out what those things are. For some people it’s art or music or just sitting in the sun. Meditation can also be a form of therapy, especially with a good teacher.

I think many creatives forget why they started doing art in the first place. Make sure you’re creating art outside of your job: art you love, done just for the sake of the art. It can be a huge outlet and a way to express what you’re feeling. It really is important to make time for it.

For myself, exercise, particularly yoga these days, has always been the best anti-depressant. However, as I’ve gotten older and injuries have become more frequent, I’ve come to rely on anti-depressant medications a bit more. Getting injured is a double whammy… I get depressed about not being able to do something I love doing and, at the same time, my main coping mechanism for dealing with depression is taken away.

Medications are a mixed bag. Not all of them work, and some can actually make things worse. So it’s important to monitor your state of mind when you first start taking them. If a medication makes you feel worse, contact your psychiatrist immediately. You may have to try a few different ones to find what works for you. After much resistance, I was finally convinced to start taking Cymbalta (duloxetine, a newer antidepressant related to Prozac) regularly. It’s actually been quite helpful. Who knew?

There is no easy answer.

What I’ve said here is meant to help and guide folks. However, it’s mostly based on my personal experience. It is not the be-all and end-all. If you have other insights, please share them in the comments. I would love to hear what has worked for other people. We’re all different: men sometimes face different challenges than women, as do different age groups, and so on. There is not one solution.

Whatever the solution is, it requires work.

But it can’t hurt to talk about it and realize we’re not alone. To know that it’s ok to be depressed. It happens. It’s an illness and needs to be treated as such. If it’s chronic, then it comes and goes, sometimes stronger, sometimes less so. By exploring meditation, seeing a therapist, taking medication, or whatever works for you, hopefully we learn how to deal with it better over time. But even after almost 40 years and all the things I’ve talked about above… I still have incredibly dark days. I still have a voice that says I’m worthless and wants to drag me down. For me and for many people, this doesn’t just disappear.

As one of my therapists said… it’s like driving a bus. Those parts of you, those passengers, are on the bus whether you like it or not. At some point you have to accept the passengers. Once you accept them, you realize they are part of you, but they AREN’T you. They don’t define you. (It’s not easy to reach that realization, and some days you’re still going to believe that voice. It happens.)

So let’s talk. Let’s be open about our experiences, what’s helpful and what’s not. Hopefully we can further de-stigmatize depression and help everyone realize that sometimes asking for help is the most courageous thing you’ll ever do.

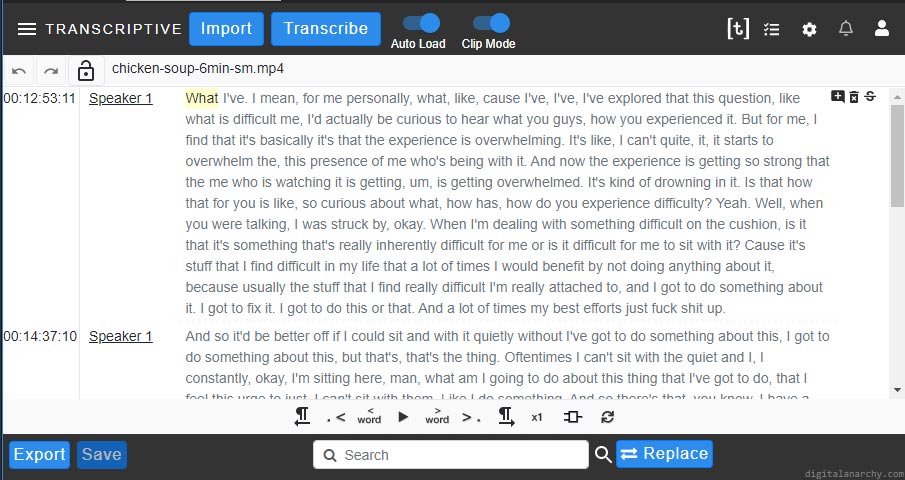

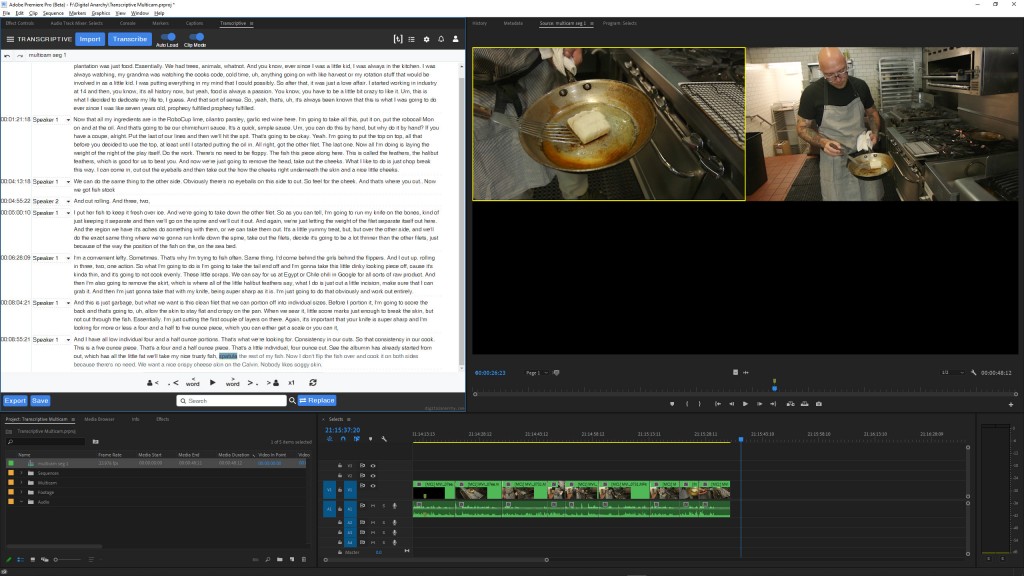

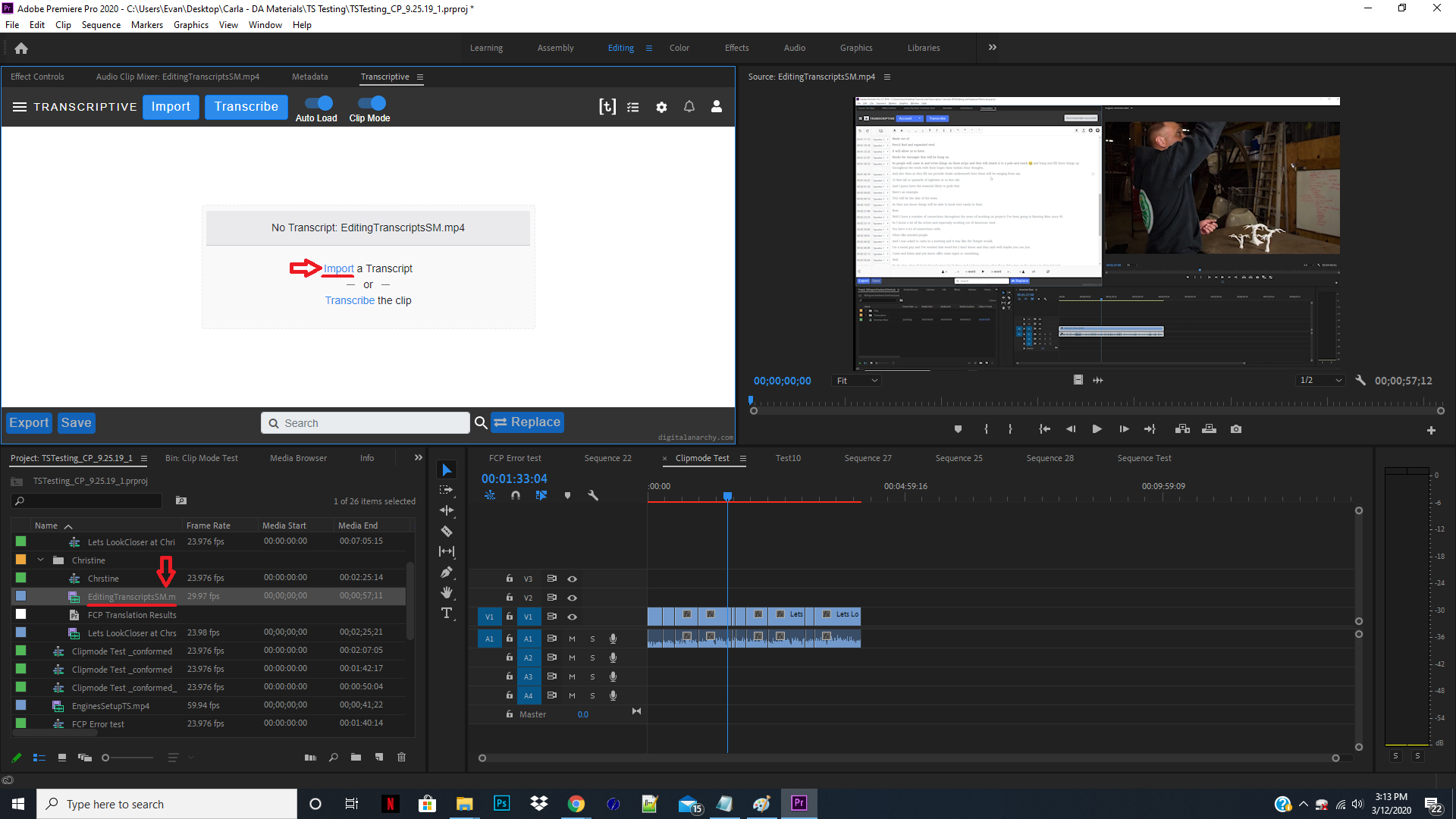

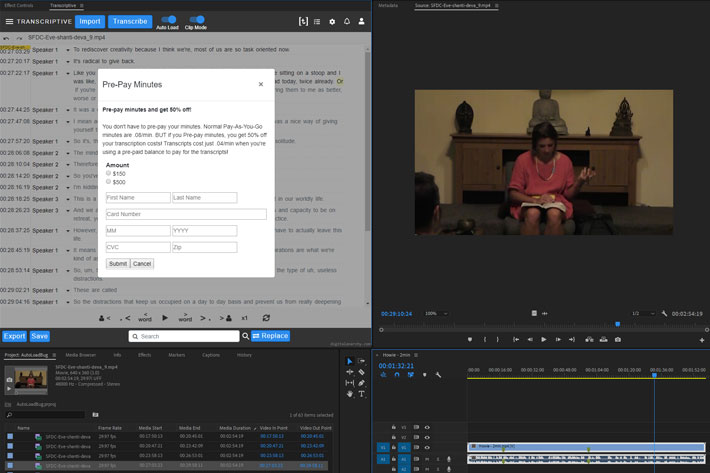

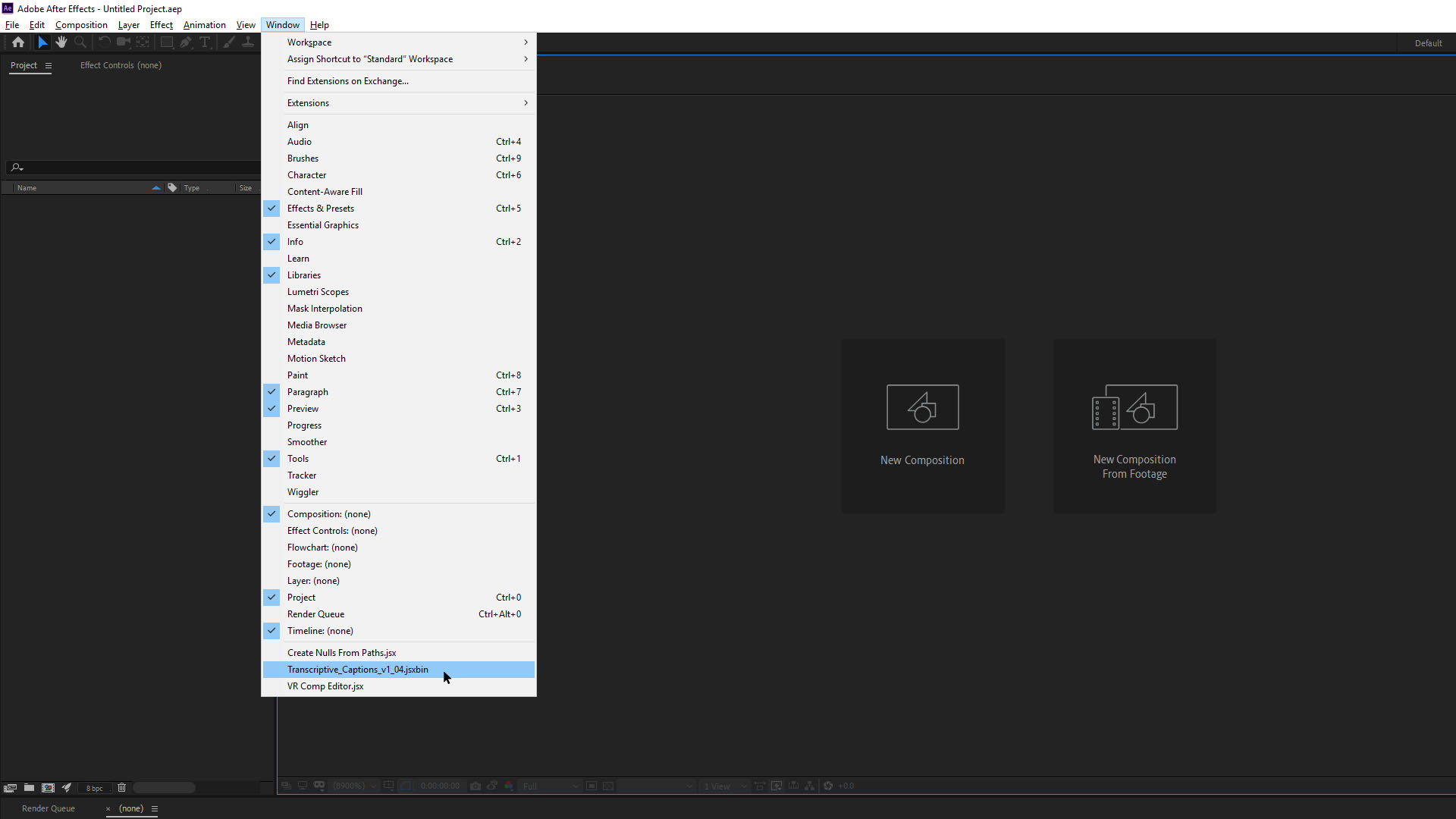

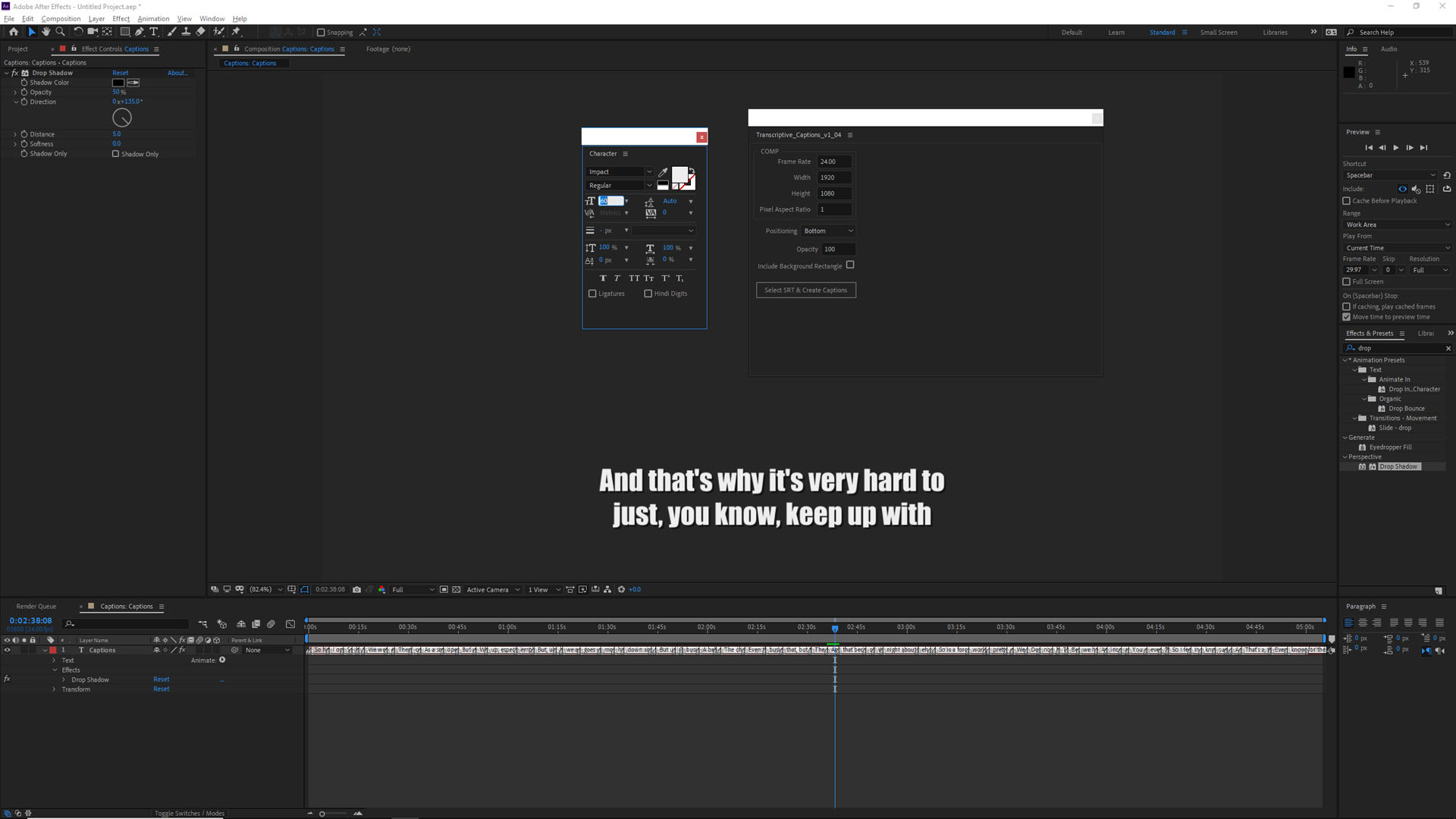

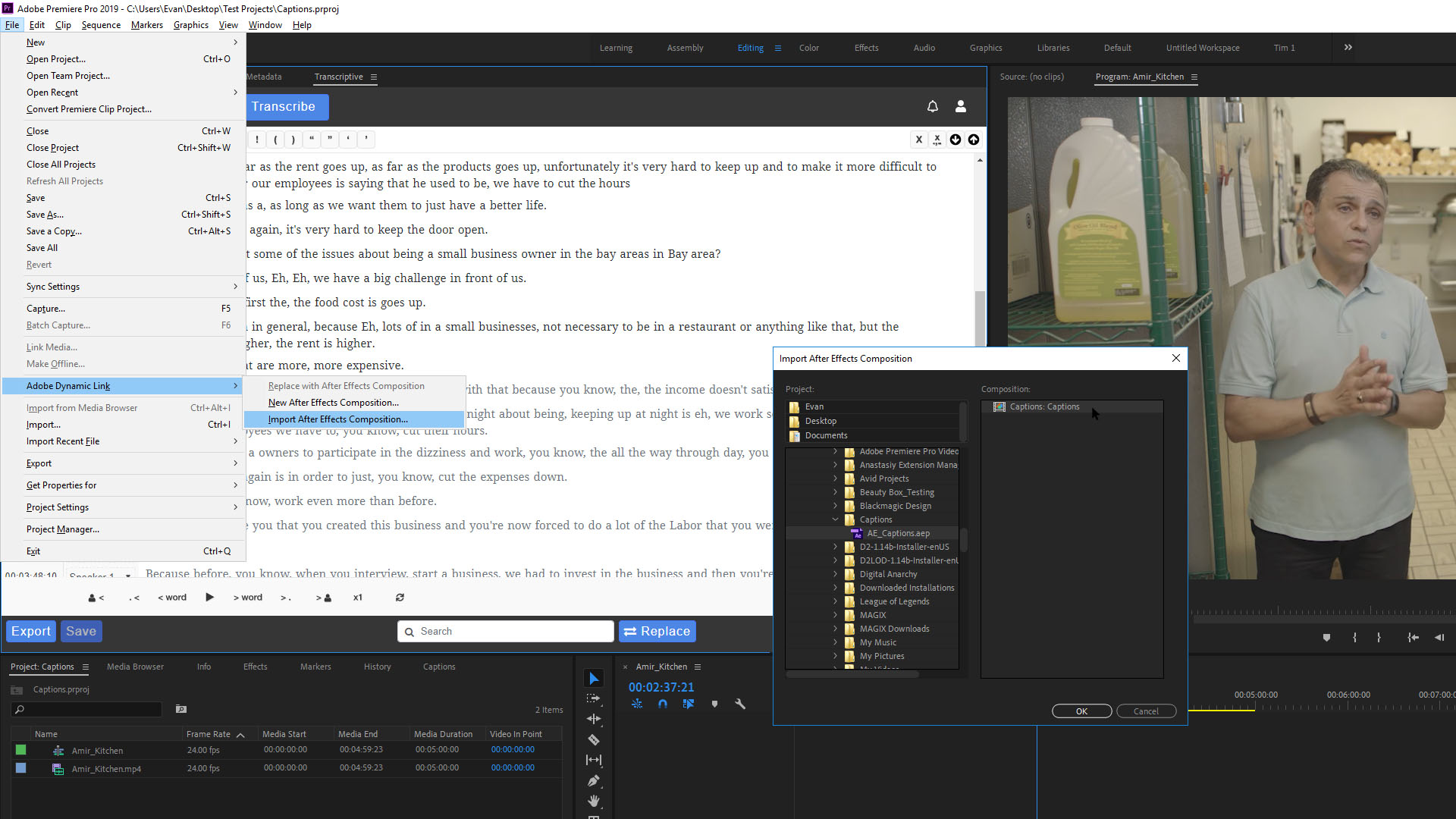

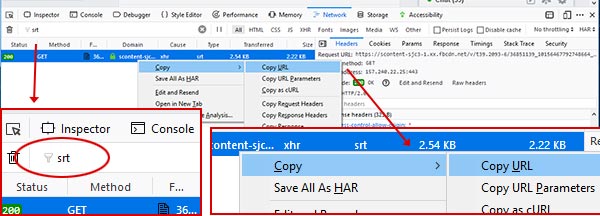

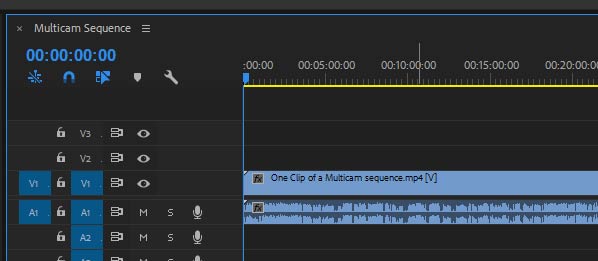

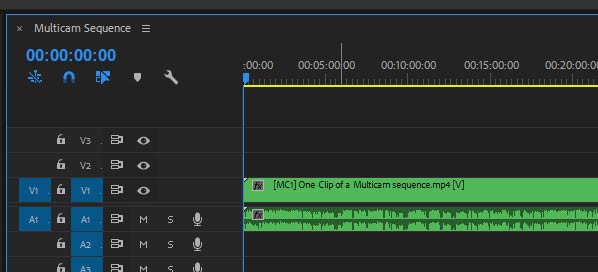

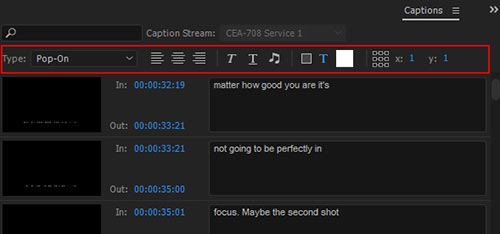

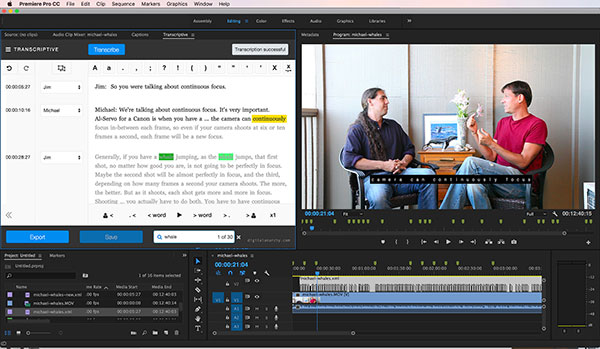

Search results in Premiere! Click to jump to that point in the media.

Search results in Premiere! Click to jump to that point in the media.

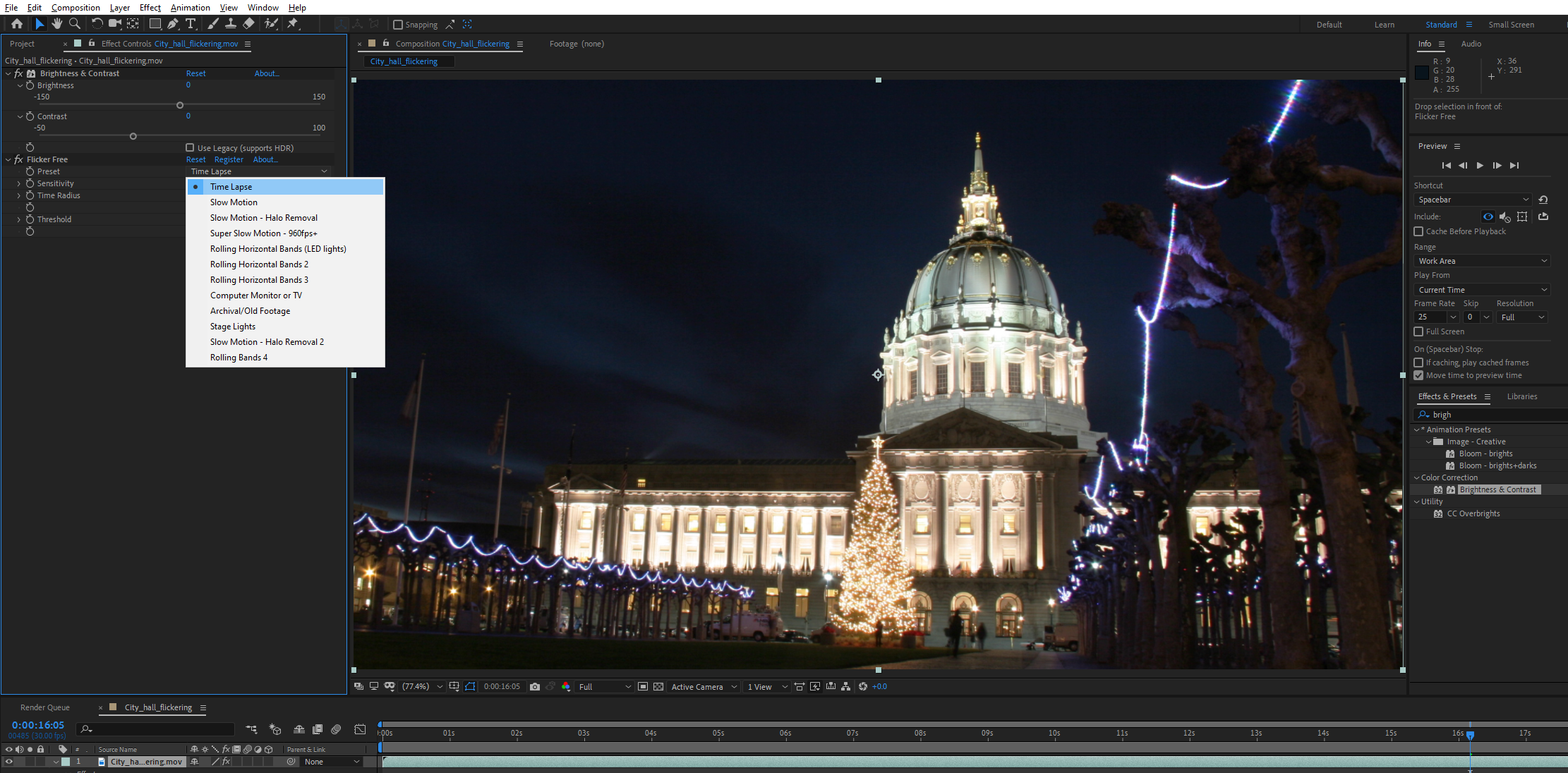

Most VR people with their headsets

Most VR people with their headsets